CHICAGO — A breakthrough study from Northwestern Medicine has revealed that Large Language Models (LLMs) can generate more complete and accurate summaries of complex cancer pathology reports than human physicians. The findings, published in the journal JCO Clinical Cancer Informatics, suggest that AI could become an essential tool for clinicians struggling to navigate increasingly data-heavy oncology workflows.

The Study: AI vs. Physicians

Researchers analyzed 94 de-identified pathology reports from lung cancer patients, which included intricate details such as:

- Histopathological findings (microscopic characteristics of the tumor).

- Immunohistochemical results (protein expression levels).

- Molecular and genetic data (critical biomarkers for targeted therapy).

The team tested six open-source models from major AI developers, including Meta (Llama 3.1), Google, DeepSeek, and Mistral AI.

Key Findings: Capturing What Humans Miss

The study found that AI-generated summaries were consistently more comprehensive than those written by doctors.

- Superior Detail: The models were particularly effective at capturing molecular and genomic findings, which are vital for determining precise treatment plans.

- Consistency: While doctors may omit details under time pressure or due to “information fatigue,” AI models maintained a high level of semantic similarity to the original reports.

- Top Performers: DeepSeek and Meta’s Llama 3.1 emerged as the strongest performers in the trial.

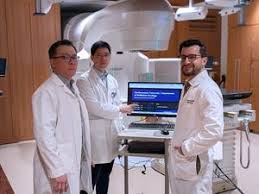

Augmenting, Not Replacing, Clinicians

Senior study author Dr. Mohamed Abazeed, a professor at Northwestern University Feinberg School of Medicine, emphasized that the goal is not to replace oncologists. “What we’re seeing is that AI can help ensure critical pathological and genomic details are consistently captured, as a tool to augment clinical decision-making,” Dr. Abazeed stated.

As cancer care becomes more longitudinal, often spanning multiple institutions and years of treatment, the volume of data per patient has grown sharply. AI offers a potential “fix” for this administrative burden, giving doctors more time for direct patient care while helping ensure that clinically important biomarkers are not overlooked.

Future Implementation

While the results are promising, the tools are not yet in clinical use. Northwestern is currently developing an application powered by Llama 3.1 designed to generate draft summaries for physician review, which will undergo further rigorous validation before being deployed in hospitals.